Samba implements many things, but in this case we look at Samba as an Active Directory DC.

In that role, Samba now has a quite credible implementation of the required protocols, and so is deployed in education, governments, large corporations and small businesses around the world.

One of the great things about our Samba users is the scale they are willing to push Samba to. With Samba now well established as a reliable solution, our enthusiastic users are now deploying it at larger and larger scales, and rightly expect that it continues to 'just work'.

The Samba team at Catalyst has been working to keep Samba performing to those expectations, and this blog will look at what we have achieved for the Samba 4.5 release.

It all started with two issues raised by our clients, which turned out to dovetail really well:

- Samba replication performance with a large number of linked attributes

- Samba runtime add/remove performance with linked attributes.

This post will examine how we addressed the first issue, in replication.

First, we worked to reproduce the situation found by the client: a domain with a large number of users, especially users in groups. Due to the second issue especially, reproducing the situation took a long time. We pre-created such a database for re-use.

Next the replication operation was run under 'perf record'. The linux perf/perf_events tools from the Main Page are a low overhead, sample-based profiling system. The system allows us to explore statistically, where our program time is spent.

Replicating the problem statement from the client, our domain has a larger number of group members, and these memberships are described as linked attributes in AD. Under perf record, we ran 'samba-tool drs clone-dc-database' to emulate replication without making any

modifications to the domain.

To determine where the time is being spent, the output was then visualised with the FlameGraph tool by Brendan Gregg.

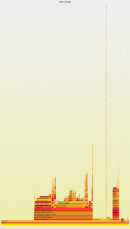

As you can see in this first image, Samba's handling of these is suboptimal in two ways:

As you can see in this first image, Samba's handling of these is suboptimal in two ways:

- A number of the processing steps are clearly being done in a tight loop (as GUID_compare() and ldb_val_equal_exact() are very fast routines when run only once.

- The processing is done in ldb_transaction_commit, so this means it is with the database transaction lock held. In this case no other process can make writes to the database while this processing is being undertaken.

One of the most helpful things about flame graphs is they are dynamic: they include JavaScript, and users can drill down into them, and use them as a map to investigate the source code. We can follow from replmd_prepare_commit() down to ltdb_modify_internal(), and see the real problem:

Helpfully, described in the comment as O(n²), we need to avoid doing this processing. In this case the solution was not a change to a better algorithm such as a merge sort, but to avoid the check entirely!

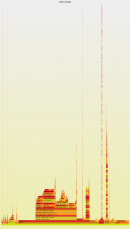

The difference this has made is quite clear as seen in the second flame graph (right). An entire tongue of flame has just vanished. This graph also differs in that we changed the trace from being kernel-wide to just the client process, once it became clear that the CPU time is only problematic in the client.

The difference this has made is quite clear as seen in the second flame graph (right). An entire tongue of flame has just vanished. This graph also differs in that we changed the trace from being kernel-wide to just the client process, once it became clear that the CPU time is only problematic in the client.

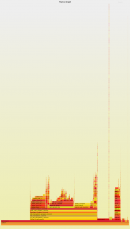

We attacked the GUID_compare() stack next, with a simple change to apply the linked attributes earlier during the replication, so as to avoid building and so traversing a long list. Again, the results are quite dramatic.

However, there is still a problem: get_parsed_dns() is being called way too often, and it spends almost as much time cleaning up the memory allocated as doing actual work.

While this is certainly worth some attention, we left this particular line of attack, and instead focused on why the underlying routines are so expensive. This matters because while clearly overused, these routines are also used incredibly often in the rest of the codebase. We focused on why the NDR parsing of GUIDs and SIDs. Both carried an incredibly high load for memory allocation compared to the work done, and so we added routines that perform NDR parsing without memory allocation.

We also examined why talloc calls, and particularly talloc_free() calls are so expensive, and talloc 2.1.8 contains these improvements.

After all this work, for this particular example, we can now do replication in around half the time previously taken!